Proficiency in the operating room: how Formula 1 principles could help surgical teams implement advanced medical technology

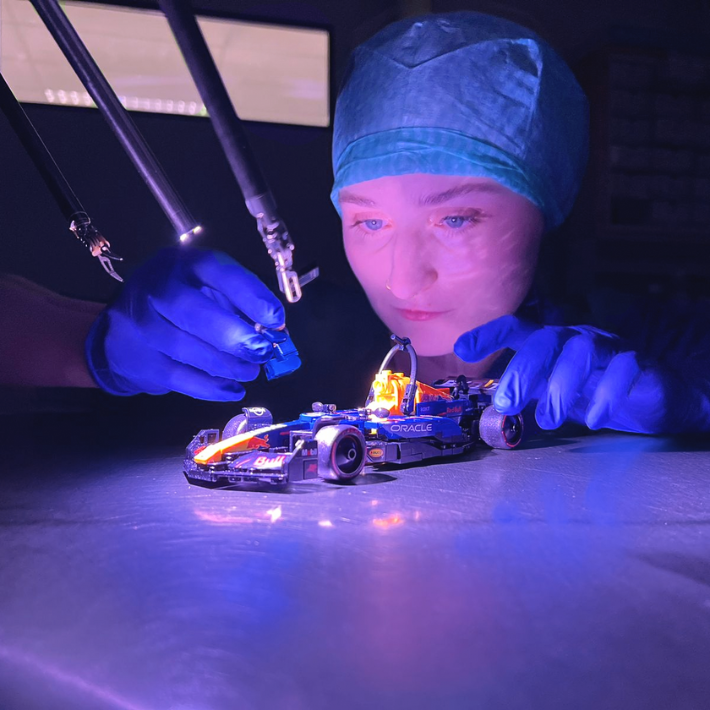

Kateryna Pirkovets in the OR


Data processing in the operating room: the Formula 1 approach

In Formula 1, everything revolves around data. Position in the race, track times, corner speeds and more are recorded, and hundreds of sensors in the car continuously measure things like steering, tyre wear and brake temperature. The combined data is sent directly to the engineers, who can process it and intervene during the race or adjust the strategy, for instance by changing tyres earlier. After the race, the fundamental aspects that define performance are analyzed in detail to improve the car's performance and the team's tactics for the next race.

But Formula 1 is not just about the driver. Behind every lap is a coordinated effort involving race engineers, data analysts, strategists, mechanics and technicians, each with a specific role in optimizing performance. Surgery, too, is a team effort. The procedure depends on seamless collaboration between surgeons, surgical assistants, anesthesiologists, technical staff, researchers and nursing teams. Inspired by the telemetry systems used in Formula 1, researchers at the LUMC are now adding data-driven decision support to an already well-coordinated surgical team, introducing a new layer of insight to further improve performance.

Smart software recognizes surgical phases

How does this work? During telerobotic surgery, the surgeon doesn’t stand next to the patient but sits behind a console that controls the movements of the robot. The robot has four arms (three surgical instruments and one camera) and is operated using foot pedals and hand controls. Thanks to a collaboration with the robot’s manufacturer, the researchers were able to continuously record all the arm movements in three dimensions (x, y and z coordinates). This provides unique datasets that can potentially help unravel the fundamental aspects of surgical performance.

PhD student Kateryna Pirkovets explains: “We collected data from four surgeries in which the prostate was removed using the Da Vinci robot, also known as RALP: Robotic Assisted Laparoscopic Prostatectomy. Each procedure lasted around three hours, during which data for 44 different parameters was measured. We looked at how fast and smoothly the instruments moved, their location within the body and relative to each other, and how long each step took. We used artificial intelligence to process and help interpret the data.”
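To make measures such as "how fast and smoothly the instruments moved" more concrete, here is a minimal Python sketch of how they could be derived from recorded x, y and z coordinates. It is an illustration under stated assumptions, not the researchers' actual pipeline: the function names, the fixed sampling interval `dt` and the use of mean jerk magnitude as a smoothness measure are all choices made for this example.

```python
import math

# Illustrative sketch only: function names, the fixed sampling interval `dt`
# and the choice of metrics are assumptions, not taken from the study.

def speeds(positions, dt):
    """Speed between consecutive (x, y, z) samples recorded dt seconds apart."""
    return [math.dist(p, q) / dt for p, q in zip(positions, positions[1:])]

def path_length(positions):
    """Total distance travelled by an instrument tip."""
    return sum(math.dist(p, q) for p, q in zip(positions, positions[1:]))

def mean_jerk_magnitude(positions, dt):
    """Mean magnitude of the third finite difference of position.

    Lower values indicate smoother movement; this is one of several
    possible smoothness measures.
    """
    samples = zip(positions, positions[1:], positions[2:], positions[3:])
    jerks = []
    for p0, p1, p2, p3 in samples:
        jerk = [(d - 3 * c + 3 * b - a) / dt ** 3
                for a, b, c, d in zip(p0, p1, p2, p3)]
        jerks.append(math.hypot(*jerk))
    return sum(jerks) / len(jerks) if jerks else 0.0

def tip_distances(positions_a, positions_b):
    """Distance between two instruments at each sample."""
    return [math.dist(a, b) for a, b in zip(positions_a, positions_b)]
```

An instrument moving in a straight line at constant speed would score a mean jerk of zero, while hesitant or abrupt movements would raise it.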

“Driving skills” in the operating room

Just like a Formula 1 track has corners, straights and chicanes, surgical procedures such as RALP unfold in distinct phases. Each phase places different demands on the surgeon's technique: sometimes speed is key, other times precision is crucial. Think of Max accelerating on a straight or braking before a sharp turn to achieve the fastest and most consistent lap times. Surgeons also need to adjust their approach depending on the phase of the surgery. Preserving nerve structures requires slow, refined movements, while stitching calls for circular motions.

The researchers found that the different surgical tasks in each phase are reflected in the instrument movements. Pirkovets: “By recognizing movement patterns, we can now, for the first time, objectively determine which phase of the operation the surgeon is in, much like an F1 team follows the car’s position on the track.”
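As a toy illustration of the idea of recognizing a phase from movement patterns, the Python sketch below assigns a short window of instrument positions to whichever phase has the closest typical speed. This is deliberately simplistic and is not the method used in the study, which applied artificial intelligence to 44 parameters. The phase labels, the single speed feature and the nearest-average rule are all hypothetical.

```python
import math

# Toy example: the phase labels, the single "mean speed" feature and the
# nearest-average rule are hypothetical and not the researchers' method.

def mean_speed(window, dt):
    """Average speed over a window of (x, y, z) samples dt seconds apart."""
    steps = [math.dist(p, q) for p, q in zip(window, window[1:])]
    return sum(steps) / (dt * len(steps))

def fit_phase_averages(labelled_windows, dt):
    """Average mean speed per phase, from (label, window) examples."""
    totals, counts = {}, {}
    for label, window in labelled_windows:
        totals[label] = totals.get(label, 0.0) + mean_speed(window, dt)
        counts[label] = counts.get(label, 0) + 1
    return {label: totals[label] / counts[label] for label in totals}

def predict_phase(window, phase_averages, dt):
    """Return the phase whose average speed is closest to this window's."""
    speed = mean_speed(window, dt)
    return min(phase_averages, key=lambda label: abs(phase_averages[label] - speed))
```

In practice a single feature would not separate phases reliably; the sketch only shows the general shape of mapping movement data to a phase label.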

Achieving peak performance

“This matters because every step counts in cancer surgery. Until now, the quality of three-hour-long RALP surgeries was assessed by colleagues reviewing the video afterwards, a process that is time-consuming and prone to human error. Automating this process not only makes it faster and more reproducible, it also provides a higher level of feedback that helps surgeons learn and evolve, just like F1 teams better their skills after each race,” says Fijs van Leeuwen, professor in molecular imaging and image-guided therapy.

Surgical trainees also benefit from this technique. Pirkovets: “The feedback is data-driven and objective. In another study, we followed trainees honing their surgical skills in a dedicated training center. In the first attempts, errors sometimes occurred. The second time, we saw that the same trainee, after having received feedback from the trainer, displayed more cautious instrument movements. This shows that movement analysis can also be used to reflect the trainee's learning curve and give detailed feedback on aspects of their skill that can be improved further.”

Shifting up a gear

The initial results are promising. The researchers are now working towards a system that, in the future, not only analyses surgical data afterwards but also provides actionable feedback during the procedure. Just as a Formula 1 driver receives updates on track position, corner speed or tyre pressure during the race, the aim is for surgical teams to eventually get real-time information to sharpen decision-making while operating and produce “wins”.

Van Leeuwen: “To analyze this huge amount of data at the required speed, we need serious computing power. For this we need supercomputers similar to the ones that help Max to improve his lap time. We are partnering not only with medtech companies like Intuitive and Barco, but also with data-processing experts like Capgemini and Oracle, the latter also known for their work in Formula 1. The goal? Not just to learn to interpret data afterwards, but to ensure that in the future every surgical team, like a Formula 1 team, can rely on smart technology to support them in delivering the best possible patient care.”

Want to know more? Watch the video ‘F1 Tactics in robot-assisted surgery’, made by Pirkovets, or read the scientific publication.

- Kateryna Pirkovets and Fijs van Leeuwen both work at the Laboratory for Interventional Molecular Imaging at LUMC and the Department of Urology at the Netherlands Cancer Institute – Antoni van Leeuwenhoek.

- This research was financed by the Dutch Research Council (NWO) KIC grant SurgiSense (KICH1.ST03.210.030).
